Note: I am publishing here the brief that Matthew Plummer-Fernandez (a.k.a. Algopop) sent me ahead of the workshop he’ll lead next week (17-21.11) with 2nd- and 3rd-year BA Media & Interaction Design students at ECAL.

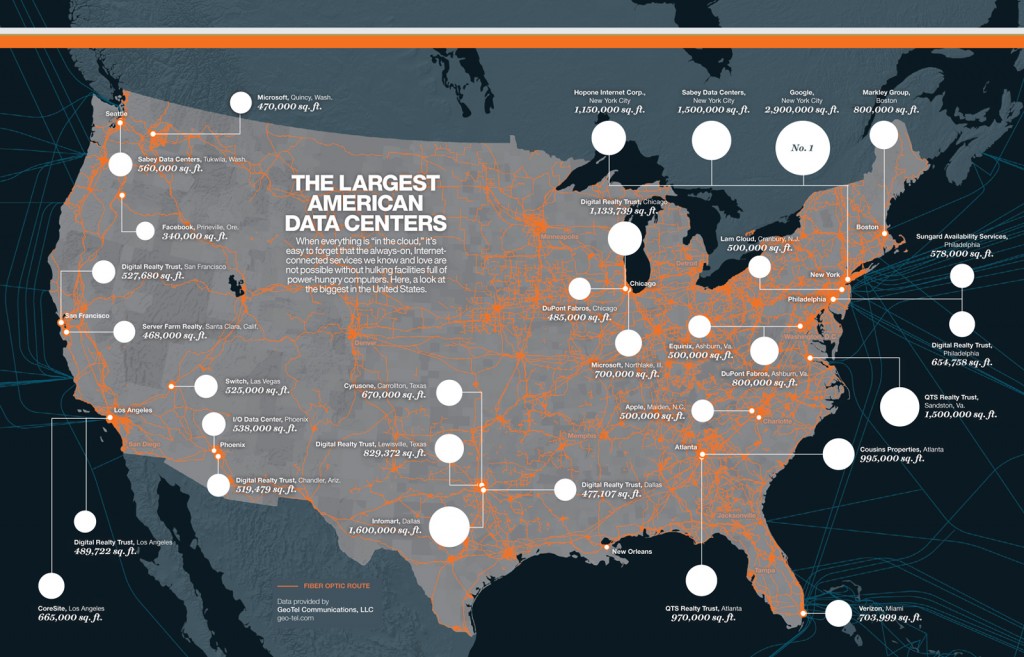

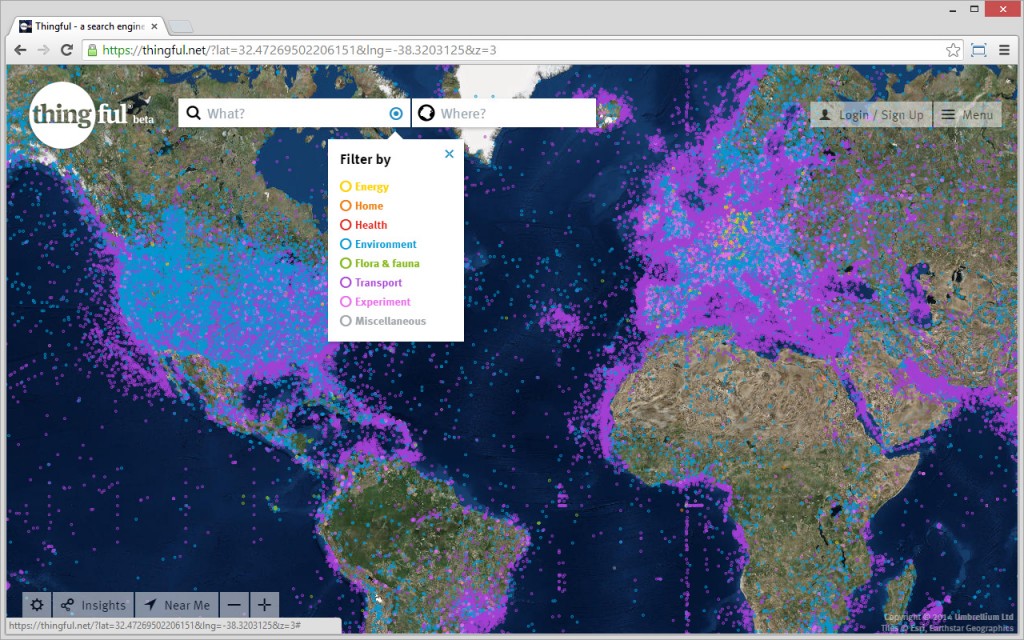

This workshop will take place within the framework of the I&IC research project, which we had the opportunity to discuss together beforehand. It will investigate the idea of very low-power computing, situated processing, data sensing/storage and automated data processing (“bots”) that could be widely distributed across everyday objects or situations. In doing so, the project will undoubtedly address the idea of “networked objects”, which, because of the limited capacity of their computing components, will become major consumers of cloud-based services (computing power, storage). Yet, following the hypothesis of the research, what kinds of non-standard networked objects/situations might emerge, and based on what kind of decentralized, personal cloud architecture?

The subject of this workshop explains some of the recent posts, which could serve as resources or tools for it, since the students will work on personal “bots” that gather, process, host and expose data.

Stay tuned for more!

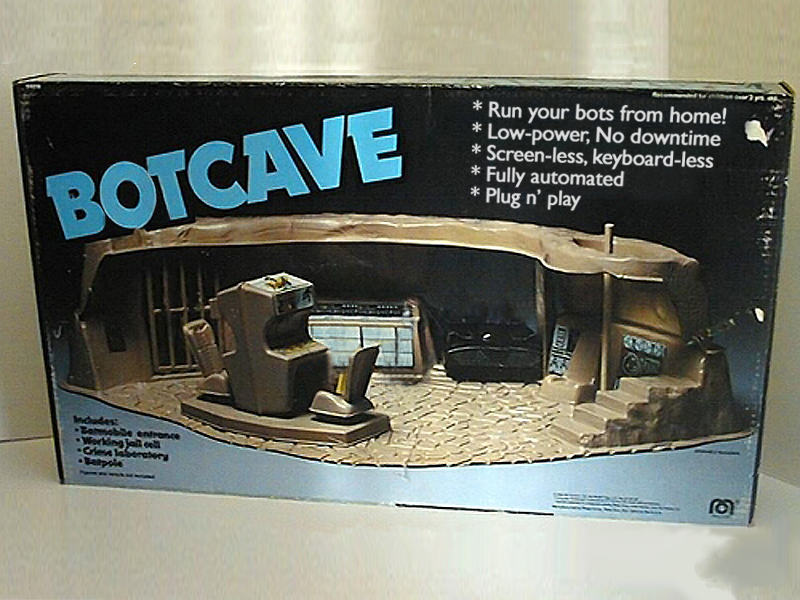

Botcaves

Algorithmic and autonomous software agents known as bots are increasingly participating in everyday life. Bots can potentially gather data from both physical and digital activity, store and share data in the ‘cloud’, and develop ways to communicate and learn from their databases. In essence, bots can animate data, making it useful, interactive, visual or legible. Bots, although software-based, require hardware to run on, and it is this underexplored crossover between the physical and digital presence of bots that this workshop investigates.

You will be asked to design a physical ‘housing’ or ‘interface’, either bespoke or hacked from existing objects, for your personal bots to run from. These botcaves would be present in the home, workspace or other, permitting novel interactions between the digital and physical environments that these bots inhabit.

Raspberry Pis, template bot code, APIs, cloud storage, existing services (Twitter, IFTTT, etc) and physical elements (sensors, lights, cameras, etc) may be used in the workshop.
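To make the gather / store / expose cycle described in the brief more concrete, here is a minimal Python sketch of what a very small bot loop might look like. It is not the template bot code mentioned above. The sensor is simulated with random numbers, a local JSON file stands in for cloud storage, and every name (`gather`, `store`, `expose`, `run_bot`, the `light` field) is a hypothetical choice made for illustration only.

```python
import json
import random
import tempfile
from pathlib import Path
from statistics import mean


def gather(rng):
    """Stand-in for a real sensor read (hypothetical light level, 0-100)."""
    return {"light": rng.randint(0, 100)}


def store(path, reading):
    """Append one reading to a local JSON file, standing in for cloud storage."""
    readings = json.loads(path.read_text()) if path.exists() else []
    readings.append(reading)
    path.write_text(json.dumps(readings))
    return readings


def expose(readings):
    """Turn the stored readings into a short, legible status message."""
    average = mean(r["light"] for r in readings)
    mood = "bright" if average >= 50 else "dim"
    return f"{len(readings)} readings, average light {average:.0f}: it is {mood} in here."


def run_bot(path, steps=5, seed=0):
    """Run the gather -> store -> expose cycle a fixed number of times."""
    rng = random.Random(seed)
    readings = []
    for _ in range(steps):
        readings = store(path, gather(rng))
    return expose(readings)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(run_bot(Path(tmp) / "botcave.json"))
```

On a Raspberry Pi, `gather` could presumably be swapped for a real sensor read, and `expose` for a post to an existing service or a signal sent to a light, depending on the botcave being designed.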

Bio

British/Colombian artist and designer Matthew Plummer-Fernandez makes work that critically and playfully examines sociocultural entanglements with technologies. His current interests span algorithms, bots, automation, copyright, 3D files and file-sharing. He was awarded a Prix Ars Electronica Award of Distinction for the project Disarming Corruptor, an app for disguising 3D print files as glitched artefacts. He is also known for his computational approach to aesthetics translated into physical sculpture.

For research purposes he runs Algopop, a popular Tumblr that documents the emergence of algorithms in everyday life as well as the artists who respond to this context in their work. This has become the starting point for a practice-based PhD funded by the AHRC at Goldsmiths, University of London, where he is also a research associate at the Interaction Research Studio and a visiting tutor. He holds a BEng in Computer Aided Mechanical Engineering from King’s College London and an MA in Design Products from the Royal College of Art.